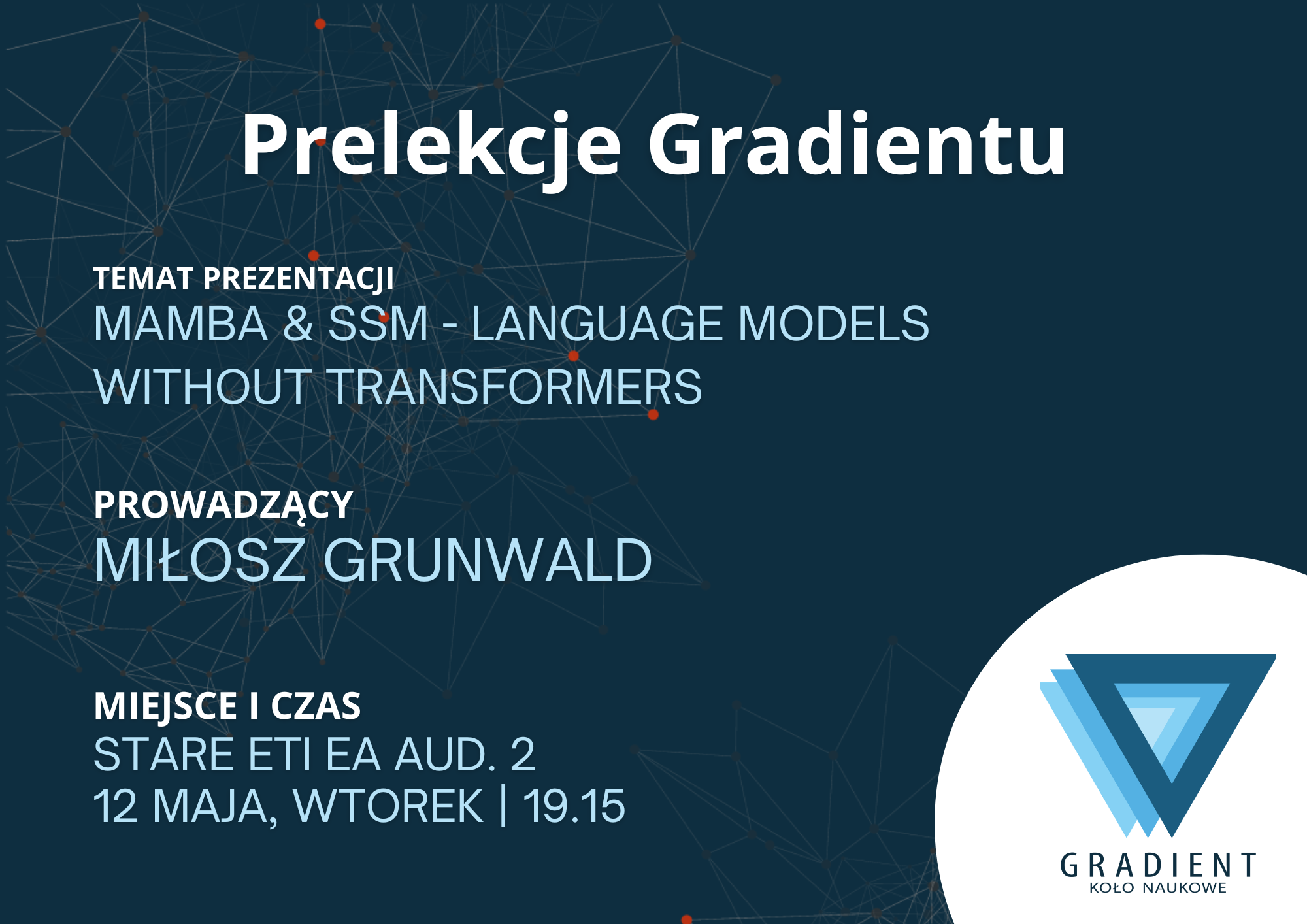

About this event

While everyone is talking about GPT, a new architecture is quietly shaking up the AI world. Join us for a session on Mamba and State Space Models (SSMs), the tech that might finally solve the "memory problem" of modern AI.

We will talk about:

- Why Transformers get slow with long texts.
- How Mamba manages to stay lightning-fast.

Whether you're an ML Engineer or just curious where the field is heading, grab a seat and let’s talk about the future of LLMs!

Goal

Knowledge Sharing.

Who it is for

Domains
